In the world of Generative AI, getting the right response isn't just about the prompt—it's about the configuration. Parameters like Temperature, Top-P, and Max Output Tokens act as the "control knobs" for an LLM's creativity, accuracy, and length.

This guide explores how these parameters work under the hood and how to tune them using the latest API standards.

1. Core Parameters Explained

Tokens: The Atomic Unit

Before understanding parameters, you must understand Tokens. Models don't read words; they read chunks of characters called tokens.

- Rule of Thumb: 1,000 tokens is roughly 750 words.

- Max Output Tokens: This parameter sets a hard ceiling on how many tokens the model can generate in a single response. Setting this too low will result in truncated, incomplete sentences.

Temperature: The "Creativity" Dial

Temperature ($T$) affects the probability distribution of the next token.

- Low (approx 0.1 - 0.3): Makes the model deterministic and focused. It will almost always pick the most likely next word. Use this for data extraction, coding, and factual Q&A.

- High (approx 0.8 - 1.5): "Flattens" the probability curve, giving less likely words a better chance. Use this for creative writing, brainstorming, or poetry.

Top-P (Nucleus Sampling)

Instead of looking at the entire vocabulary, Top-P limits the model to a subset of tokens whose cumulative probability reaches the threshold $P$.

- Example: If P=0.9, the model only considers the top tokens that make up 90% of the probability mass. This ensures the model stays "within the realm of possibility" even at high temperatures.

- Pro Tip: Experts usually recommend adjusting either Temperature or Top-P, but rarely both at the same time.

Top-K Sampling

Top-K is a simpler filter that tells the model to only consider the top $K$ most likely next tokens.

- If K=50, the model picks from the 50 best options, regardless of their individual probability.

- Note: While widely used in open-source models (like Llama), many hosted APIs (like OpenAI) prioritize Top-P over Top-K.

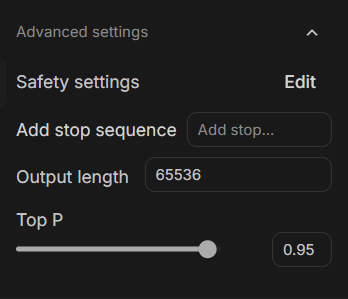

2. Tuning Responses via API

Using the OpenAI Python SDK, you can dynamically adjust these parameters to suit different tasks.

Scenario A: The Fact-Checker (Low Randomness)

For tasks requiring high precision, we set a low temperature and a strict token limit.

from openai import OpenAI

client = OpenAI(api_key="your_api_key")

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": "Extract the invoice number from this text: 'Inv-99281'"}],

temperature=0.0, # Minimize hallucination

top_p=1.0, # No nucleus filtering needed for facts

max_output_tokens=10 # We only need a short string

)

print(response.choices[0].message.content)

Scenario B: The Creative Ghostwriter (High Randomness)

For creative tasks, we loosen the constraints to allow for more varied vocabulary and longer descriptions.

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": "Write a sci-fi opening about a neon city."}],

temperature=1.2, # Boost creativity

top_p=0.9, # Use nucleus sampling to keep it coherent

max_output_tokens=500

)

print(response.choices[0].message.content)

Scenario C: The Code Optimizer (Balanced & Precise)

For coding, you want logic but also the ability to see alternative, efficient patterns. A low-to-medium temperature ensures the code is valid while allowing for architectural suggestions.

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": "Optimize this Python loop for performance: [code snippet]"}],

temperature=0.2, # Keep it logical and syntactically correct

top_p=0.95, # Allow for slight variations in optimization patterns

max_output_tokens=800

)

print(response.choices[0].message.content)

Scenario D: The Fun & Humorous Persona (Unpredictable & Witty)

To get a model to sound more human or humorous, you want to avoid the most "obvious" robotic answers. High temperature combined with a presence penalty forces the model to find more colorful language.

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": "Explain taxes to me like I'm a grumpy 18th-century pirate."}],

temperature=1.3, # High variance for unpredictable humor

presence_penalty=0.6, # Discourage repetitive "pirate" clichés

max_output_tokens=300

)

print(response.choices[0].message.content)

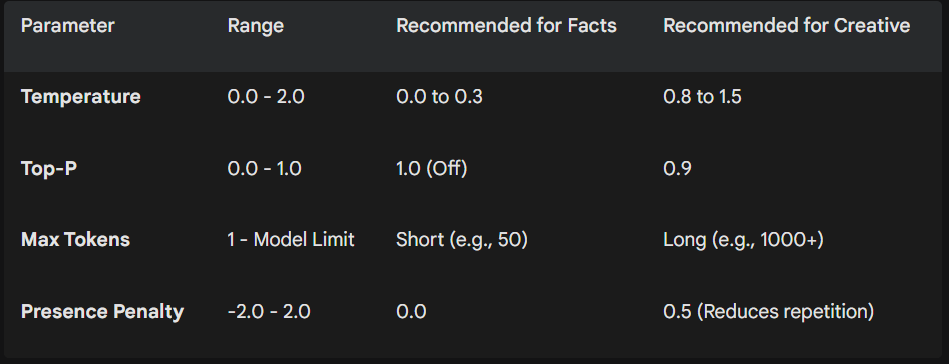

3. Parameter Comparison Table

Summary

Fine-tuning your model response is a balance between determinism and diversity.

- Need a reliable assistant? Keep Temperature low.

- Need a creative partner? Bump up the Temperature and Top-P.

By mastering these "hidden" settings, you can transform a generic AI response into a precision tool tailored for your specific use case.